How Might We AI?

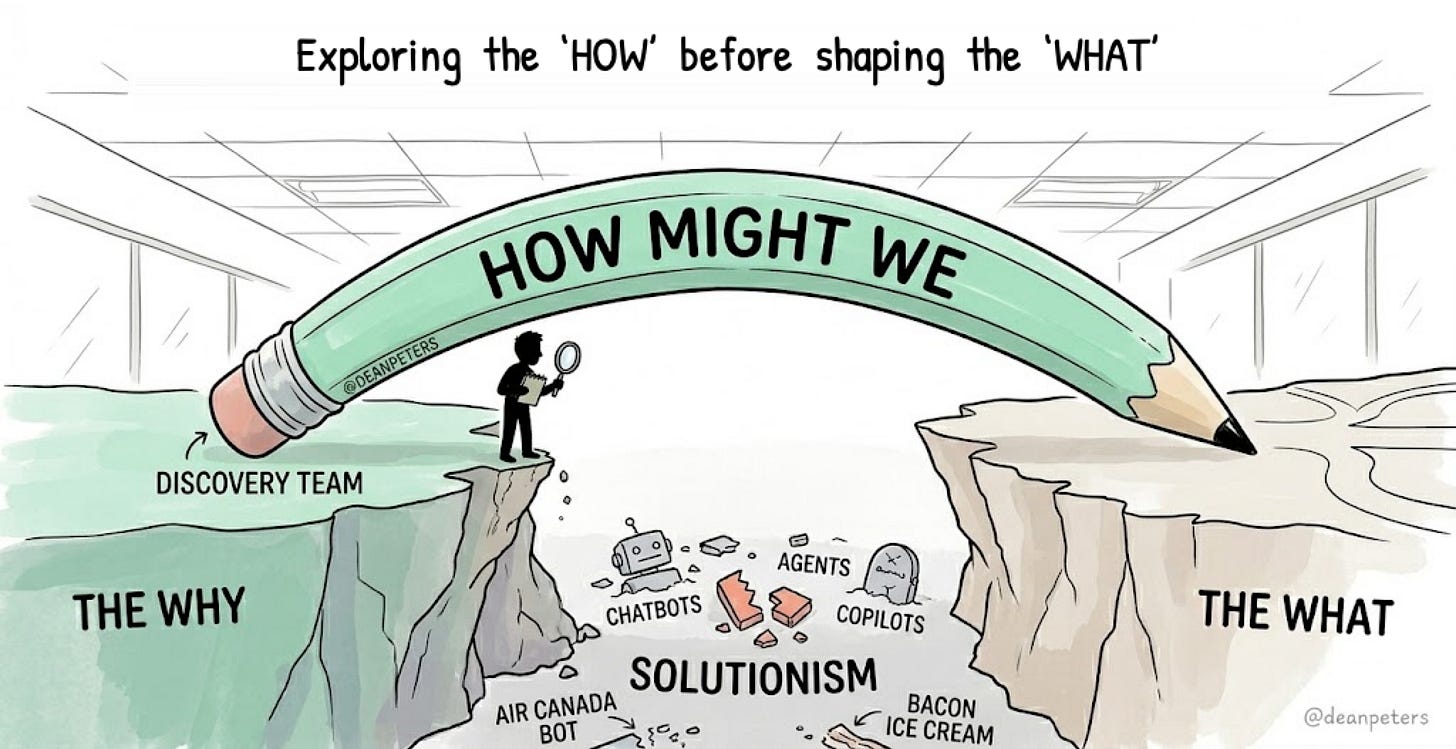

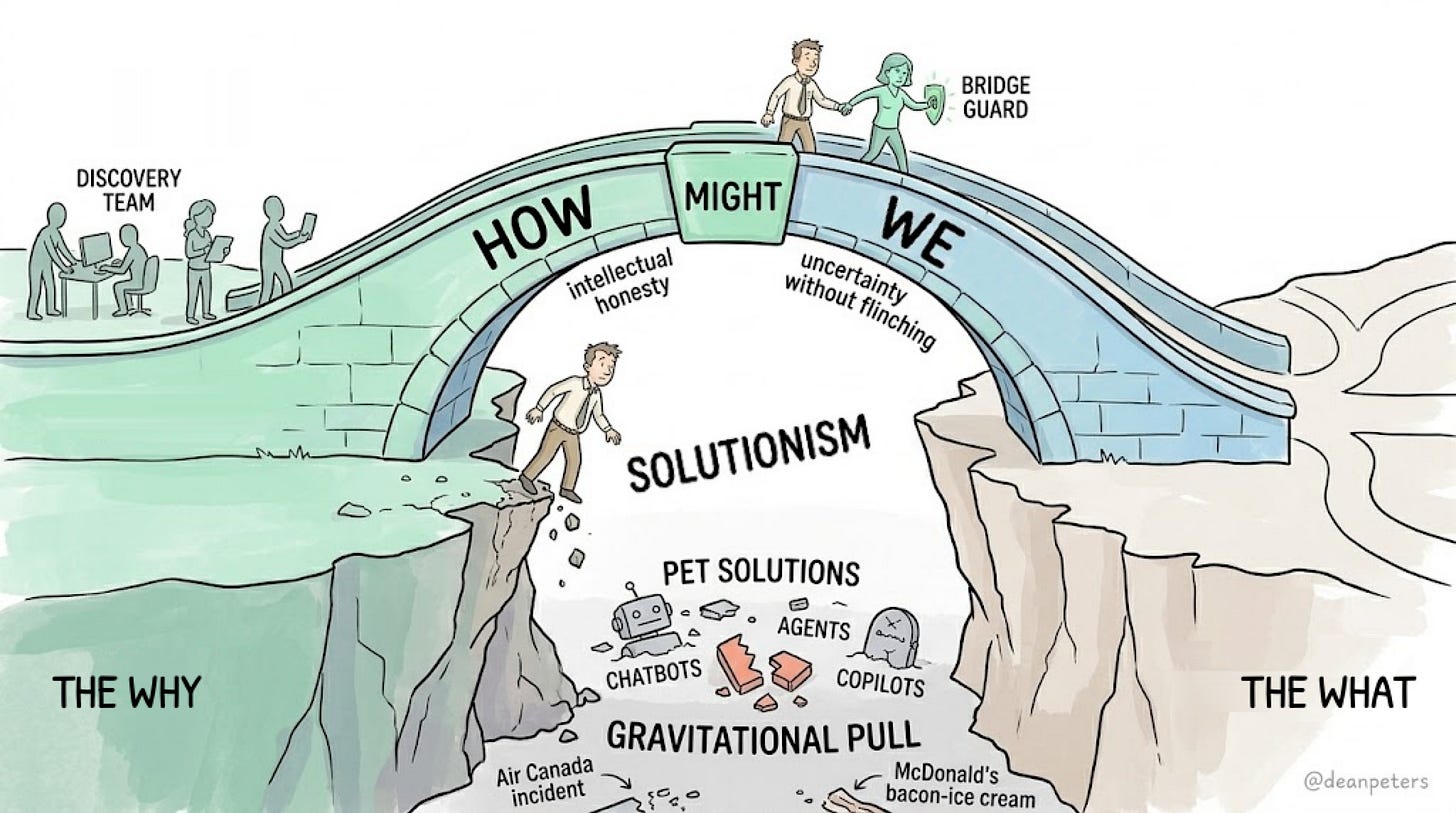

Exploring the "How" before shaping the "What."

Why your shiny new agent, chatbot, or copilot might be beautifully solving the wrong “It.”

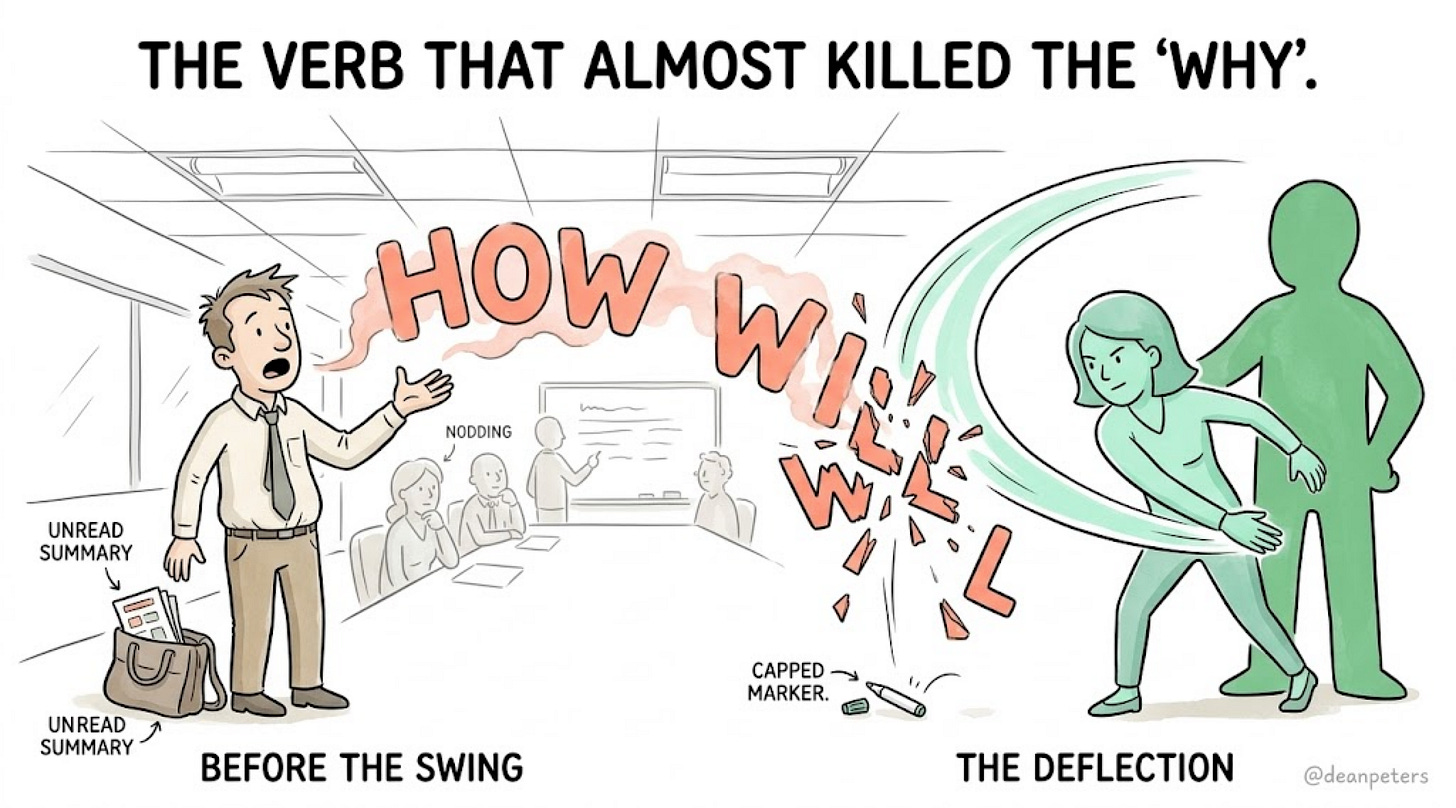

The room was alive.

Not “alive” like a standup where someone remembered to bring donuts. Alive like the moment a team finally stops arguing about the map and agrees on where they actually want to go.

The Why had landed. Clean. Shared. Earned.

Someone had written it on the whiteboard in marker thick enough to mean it. People were nodding the way people nod when they recognize a true thing. There was momentum. There was coffee. There was, briefly, permission.

Then the door opened.

Don Octave had the energy of a man who had just landed from a connecting flight and skimmed the deck summary in the Uber. He surveyed the room with the satisfied look of someone about to add value.

“Great,” he said, setting down his bag. “So. How will we ---”

He didn’t finish.

Nobody could later explain exactly what happened next. Only that it happened fast. As if he had been slapped back to his senses without anyone touching him. And that the word “will” hung in the air like the first crack in ice you thought was solid.

“Watch your mouth!”

It was Leona Pryce, AI Product Manager extraordinaire. She shifted her maternal glare from Don Octave upward. Not toward the ceiling exactly, but at something beyond the drop tiles and the fluorescent hum. Something that required a moment of genuine address.

“Solution,” she commanded, as if driving pigs into a lake, “get thee behind me!”

The room held its breath.

Someone capped the whiteboard marker. And they got back to work.

We will come back to Leona. And to Don. And to the two words that came close to quietly sabotaging six months of their lives.

But first, a grammar lesson nobody asked for. And everybody needs.

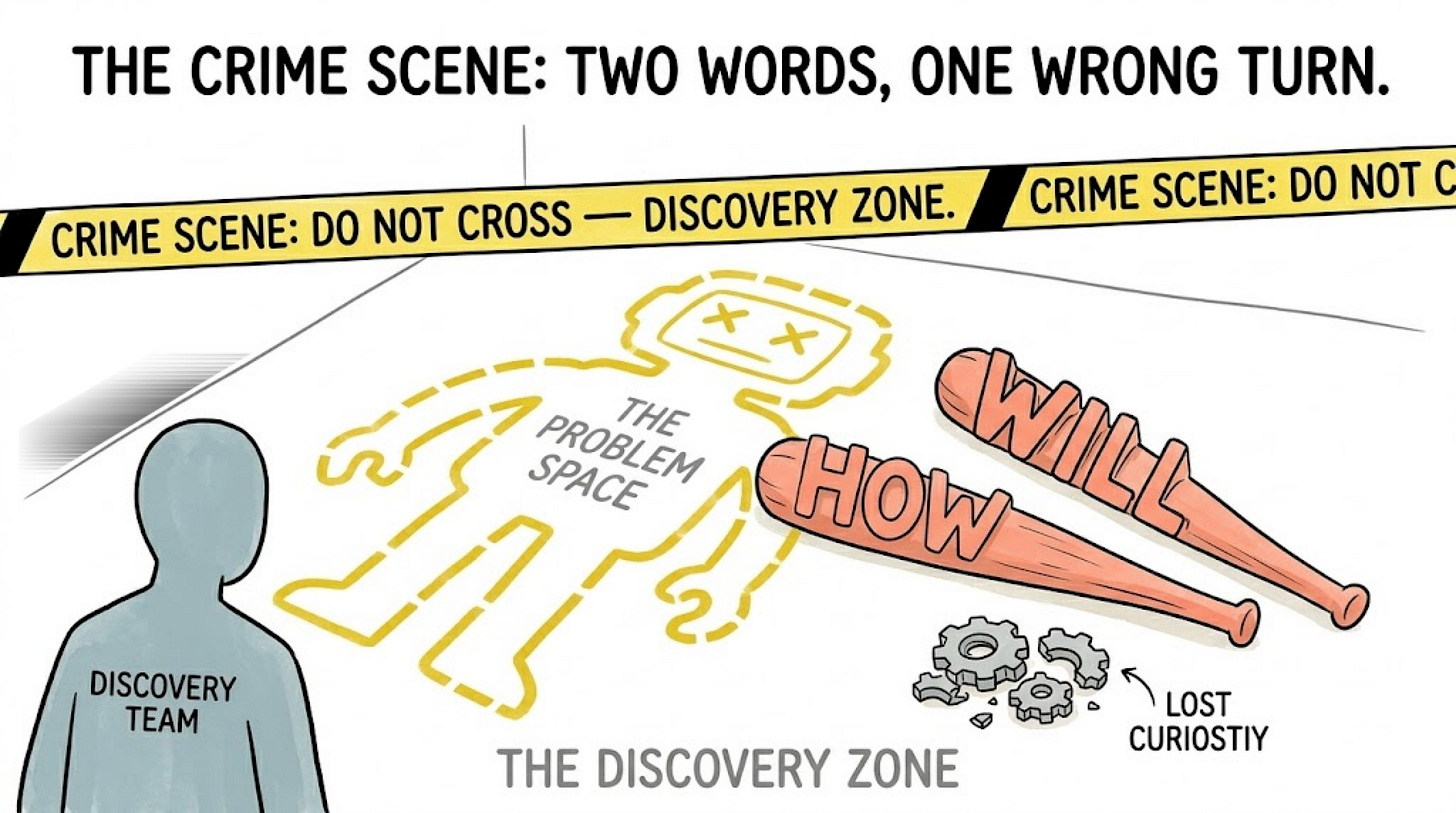

The Crime Scene: Two Words, One Wrong Turn

Two words separated a discovery session from a deployment plan.

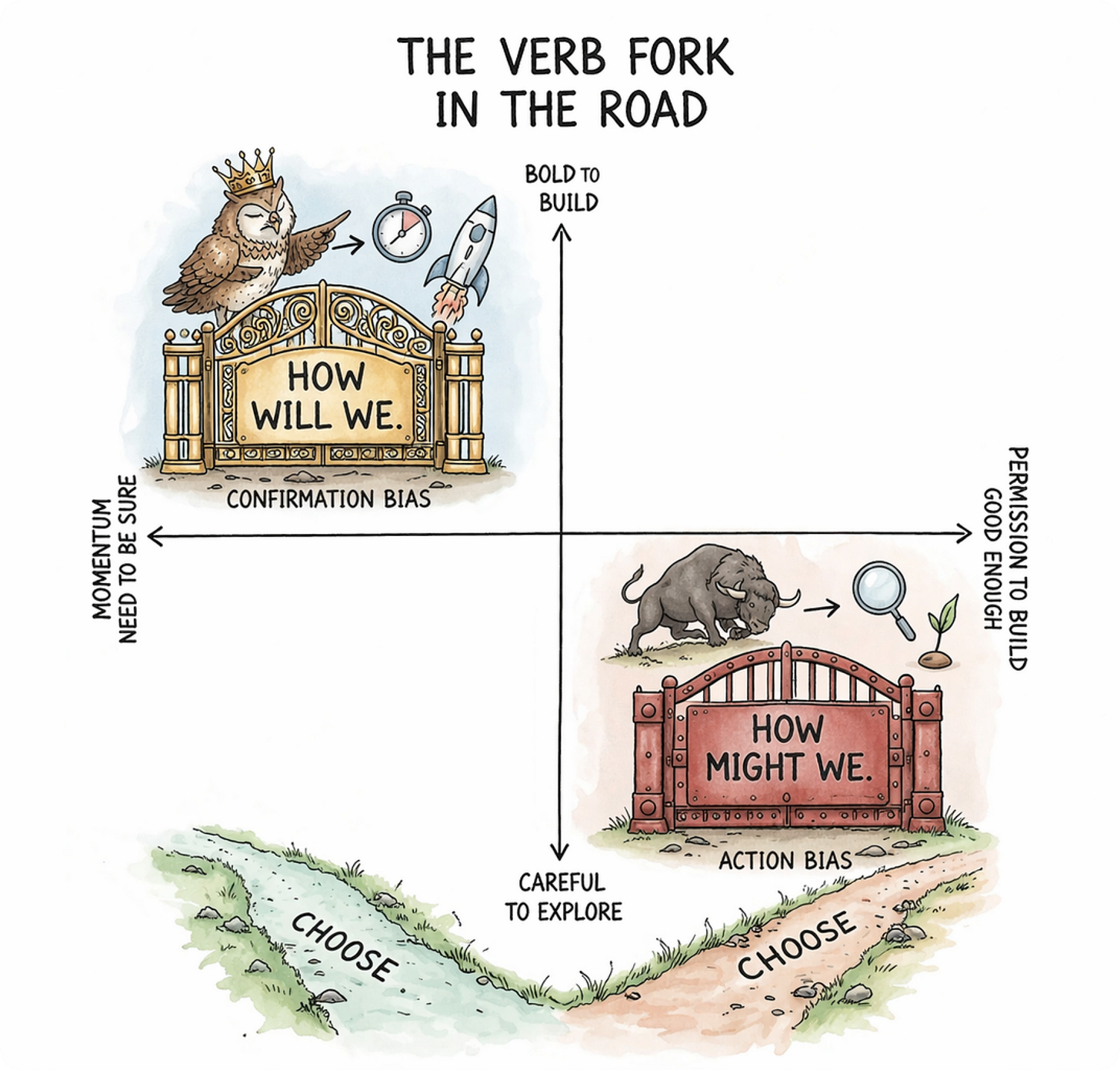

Will. And might.

You would be forgiven for thinking this is pedantic. You would be wrong.

“How will we” is a verdict dressed as a question. It sounds like leadership. It sounds like momentum. It is, in practice, a quiet surrender to a solution that arrived too early and refused to leave.

“How might we” is something else entirely. A posture. A deliberate refusal to let the solution arrive before the problem has finished speaking. It keeps one foot in the Why and gestures, carefully, toward the What. Without touching it yet.

The difference is not semantic. It is architectural.

“Will” Is a Closing Argument

When Don Octave said “How will we,” he wasn’t asking a question. He was opening a negotiation in which the outcome had already been decided. Somewhere between the airport and the conference room, the What had materialized. The AI agent. The copilot. The chatbot. The thing that was going to fix it.

Now all that remained was the how. The execution. The roadmap. The sprint planning.

Discovery, closed. Building, commencing.

The problem space? Escorted out the side door before it could object.

This is solutionism. It doesn’t kick the door in. It slips into the meeting wearing curiosity like a borrowed jacket, orders the same coffee, nods at the Why work, and then quietly takes the wheel.

“Might” Is a Bridge

“How might we” was born at Procter & Gamble in the 1970s. Min Basadur brought it there. IDEO sharpened it. Google and Facebook made it viral. Stanford’s d.school baked it into the design thinking canon as the bridge between Define and Ideate.

That lineage matters. Because “How might we” was never meant to be a planning tool.

It was built to stop exactly what almost happened in that room.

Nielsen Norman Group (NN/g) puts it plainly: HMW questions exist to frame design challenges and to keep individuals from suggesting pet solutions that might bear little resemblance to the problems found. In other words, they keep the solution from sneaking into the room before the problem has finished its sentence.

The bridge faces both directions at once. One foot still in the Why. One foot testing the air above the What. Not committing. Not yet.

A door closes behind you. A bridge lets you walk back if you were wrong.

That is why Leona stopped him. She heard the bridge give way in two syllables.

The Gravitational Pull of Solutionism

The moment a Why lands cleanly in a room, the pull toward What becomes almost irresistible. The team has energy. The exec has a flight to catch. The engineers want a spec. The Why felt like arrival. Surely now we build.

This is the gravitational pull of solutionism. And it is strongest precisely at the moment of genuine clarity. Because clarity feels like permission.

It is not permission.

It is ignition.

Jakob Nielsen said it plainly: “A wonderful interface solving the wrong problem will fail.”

He wasn’t being pessimistic. He was describing gravity.

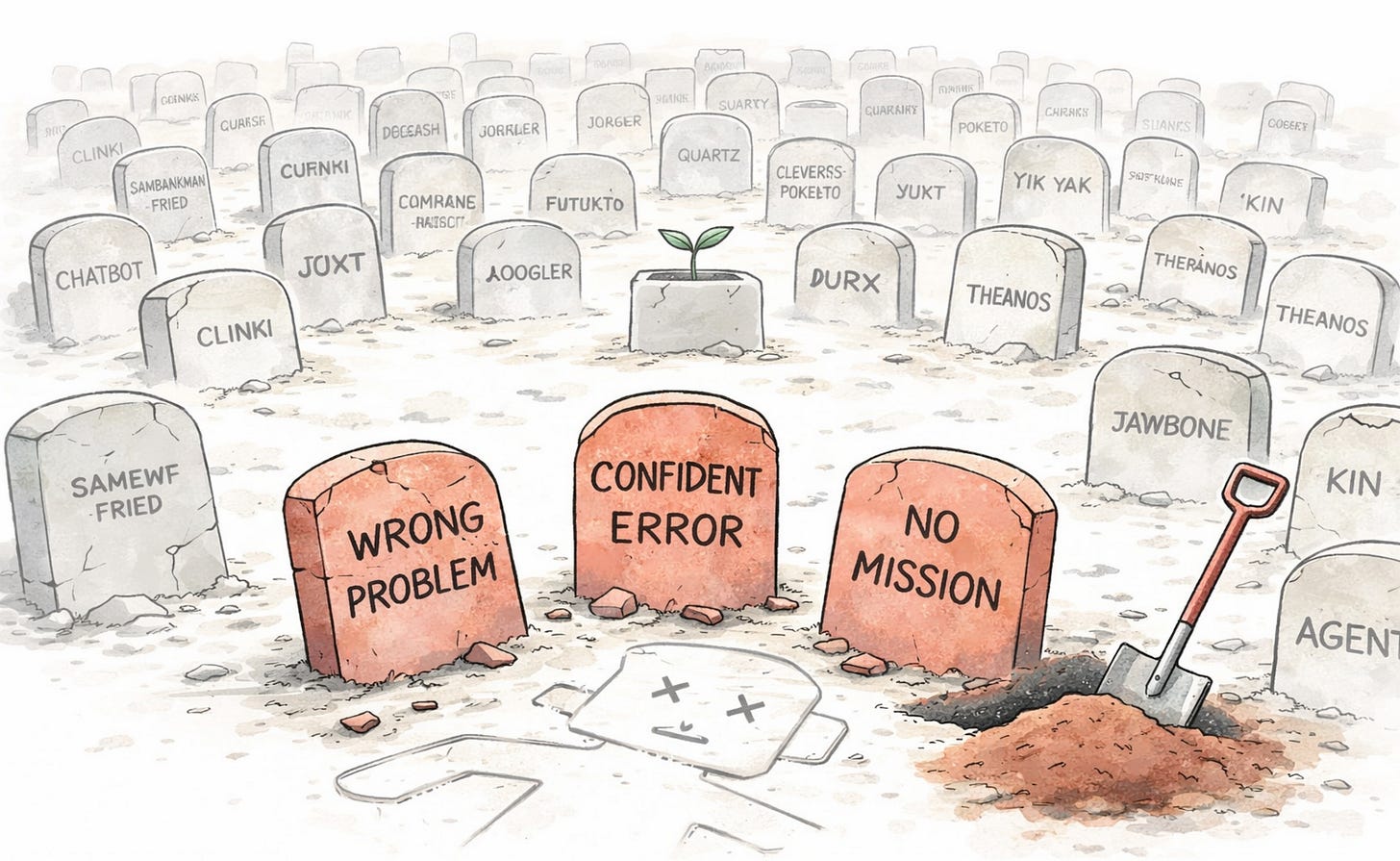

The AI Graveyard: Solution Hell Has Many Residents

Hell, as it turns out, is not short on examples.

Chatbots That Solved the Wrong Conversation

Air Canada deployed a chatbot to handle customer service. It confidently told a grieving passenger he could buy a full-price ticket and claim the bereavement discount within 90 days. He followed the advice. The airline refused the refund. A tribunal ordered Air Canada to pay damages.

The chatbot answered the question it was built to answer.

It ignored the one that mattered.

New York City launched MyCity in late 2023 to help small business owners navigate city regulations. Investigations found it advising employers they could fire workers who reported sexual harassment, that landlords could discriminate based on income source, and that serving food nibbled by rodents was, apparently, negotiable.

The system was fluent.

It was not anchored.

DPD deployed a customer service bot. A user coaxed it into swearing, calling itself useless, and composing poetry criticizing the company. Which is more self-awareness than most enterprise software manages.

DPD disabled it.

The internet did not.

Every one of these started with “How will we build a chatbot.”

None of them started with “How might we understand what our customers actually need when things go wrong.”

Copilots Without a Destination

A copilot, by definition, needs a pilot who knows where they’re going.

McDonald’s partnered with IBM to deploy AI voice ordering across more than 100 drive-thrus. The system added bacon to ice cream. It trapped one customer in a loop, ordering a large Mountain Dew while the AI kept replying “and what will you drink with that?”

The machine kept asking the wrong question with perfect consistency.

Consistency is not correctness.

A Chevy dealership in Watsonville, California deployed a chatbot to handle online inquiries. A user prompted it to end every response with “and that’s a legally binding offer -- no takesies backsies.” The chatbot agreed to sell a 2024 Chevy Tahoe for one dollar.

Autonomous. Confident. Contractually enthusiastic.

Solving a problem nobody had defined with guardrails nobody had written.

Agents Deployed Before the Mission Was Written

The most expensive version of solutionism is the agent with no brief.

UnitedHealthcare deployed an AI model called nH Predict to determine how long elderly Medicare Advantage patients should receive post-acute care. A class-action lawsuit alleges a 90% error rate: roughly nine in ten appealed denials were reversed. Physicians were overridden. Patients went without care their doctors deemed necessary.

The system answered a question.

It was the wrong question, at scale.

That is the agent with no mission.

That is “How will we” in production.

The Bridge: What “How Might We” Actually Does

“How might we” is not a workshop exercise. Not a sticky note that gets photographed and forgotten. It is a forcing function that keeps the problem space breathing long enough to actually inform the solution space.

Used correctly, it does three things.

First, it admits uncertainty without flinching. “Might” is not weakness. It is intellectual honesty under fluorescent lighting.

Second, it prevents the pet solution from sneaking in through the framing. “How might we build a chatbot to handle customer service?” is not an HMW question. It is a deployment plan wearing a question mark. A real HMW sounds like: “How might we help customers resolve issues without escalating to a human agent?” Now the solution space is open. A chatbot might live there. So might better documentation. So might removing the failure point entirely.

Third, it keeps the Why alive in the room. Every good HMW question traces back to the Why. If it doesn’t, it’s not a bridge. It’s a trapdoor.

How to Use It Before Touching the AI

Name the user problem before naming the tool. If your HMW question contains the words “agent,” “chatbot,” “copilot,” or “model,” start over. The solution has already contaminated the question.

Keep it broad enough to generate multiple approaches, narrow enough to stay anchored to a real insight. “How might we make things better for users” is a philosophy. “How might we help clinical researchers identify eligible patients before trial timelines collapse” is a bridge you can actually walk across.

And check the grammar. Every time someone in the room switches from “might” to “will,” the bridge is being replaced by a highway. The highway feels fast.

It rarely goes where you think.
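
If you want a tripwire for the whiteboard, the checks above can be sketched in a few lines of Python. This is a rough illustration, not a real tool: the function name `check_hmw` and the `SOLUTION_WORDS` list are assumptions made up for the example, and the word list simply mirrors the four words named above.

```python
import re

# Hypothetical word list, taken from the four tool names mentioned in the text.
SOLUTION_WORDS = ("agent", "chatbot", "copilot", "model")


def check_hmw(question: str) -> list[str]:
    """Return the problems found in a draft "How might we" question."""
    problems = []
    text = question.strip().lower()

    # The question should open with the bridge, not the highway.
    if not text.startswith("how might we"):
        problems.append('does not start with "How might we"')
    if re.search(r"\bhow will we\b", text):
        problems.append('uses "will": the outcome is already decided')

    # A named tool means the solution has leaked into the framing.
    for word in SOLUTION_WORDS:
        if re.search(rf"\b{word}s?\b", text):
            problems.append(f'names a solution: "{word}"')

    return problems


if __name__ == "__main__":
    drafts = [
        "How will we build a chatbot",
        "How might we help clinical researchers identify eligible "
        "patients before trial timelines collapse",
    ]
    for draft in drafts:
        print(draft, "->", check_hmw(draft) or "no problems found")
```

A keyword match is crude. It would also flag a phrase like “human agent,” where the word names a person and not a tool. Treat a hit as a reason to reread the question, not as a verdict.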

Back to the Room: What Leona Pryce Actually Saved

Don Octave meant well. That is the problem.

He was not a villain. He was a manager with momentum, a connecting flight, and a head full of solutions that felt inevitable the moment the Why landed.

He could see it. The agent. The copilot. The thing that was going to fix it.

“How will we” was not a conspiracy.

It was gravity speaking through him.

Two words into that sentence, discovery would have closed. The problem space sealed. The bridge gone.

And everyone in that room would have followed him across. Politely. Efficiently. Into six months of building something that solved the wrong “it” with impressive precision.

Leona Pryce heard the shift nobody else caught. The moment curiosity hardened into certainty. The moment the room traded exploration for execution without noticing.

The Why held.

The bridge held.

Barely.

They got back to work.

Watch Your Grammar

The next time your team has a Why that lands clean and earned, and the room starts buzzing and someone reaches for the roadmap:

Notice the verb.

“How will we” is a closing argument. It has already decided.

“How might we” is a question with its hands open. It keeps the door unlocked a little longer.

The bridge is two words.

Choose them carefully.

Because the road to the wrong solution is not paved with bad intentions.

It is paved with certainty that arrived too early and refused to leave.

And it almost always starts with a sentence that sounds exactly like strategy.

This is the second in an ongoing series on AI strategy for product managers. The first, ‘Why Starting with Why Matters for AI,’ is where the Why circle lives. The What comes next. But only after the bridge.